Leaky ReLU


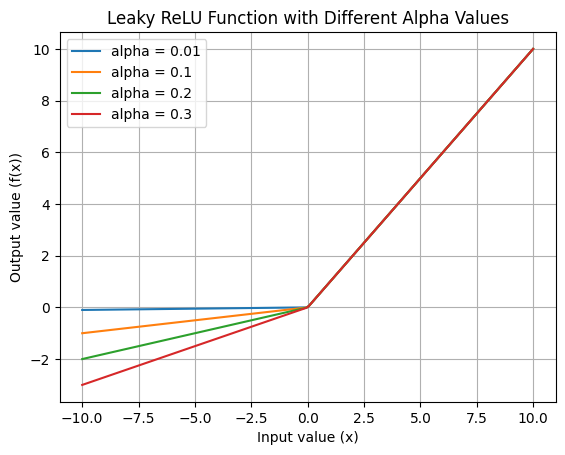

Leaky ReLU was introduced to overcome the limitations of ReLU. It is a modified version of ReLU designed to fix the problem of dead neurons. Parametric ReLU (PReLU) is a further variation of ReLU and Leaky ReLU in which the slope applied to negative inputs is learned during training instead of being fixed in advance.
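For reference, the three functions named above can be written side by side. This is the usual textbook form; the symbols $\alpha$ (a small fixed constant, commonly 0.01) and $a$ (a learned parameter) are notation chosen here, not taken from the text above.

```latex
\mathrm{ReLU}(x) = \max(0, x)

\mathrm{LeakyReLU}(x) =
\begin{cases}
  x        & \text{if } x > 0 \\
  \alpha x & \text{otherwise}
\end{cases}
\qquad \alpha \text{ fixed, e.g. } 0.01

\mathrm{PReLU}(x) =
\begin{cases}
  x   & \text{if } x > 0 \\
  a x & \text{otherwise}
\end{cases}
\qquad a \text{ learned during training}
```

The only difference between Leaky ReLU and PReLU in this form is whether the negative-side slope is a hyperparameter or a trainable weight.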

Figure: Comparing ReLU and Leaky ReLU activation functions.

The choice between Leaky ReLU and ReLU depends on the specifics of the task, so it is worth experimenting with both to see which works better. Leaky ReLU introduces a small slope for negative inputs, allowing the neuron to respond to negative values and preventing complete inactivation.

ReLU and its variants, such as Leaky ReLU and PReLU, differ in speed, accuracy, convergence, and gradient behavior.
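The "small slope for negative inputs" can be made concrete with a minimal pure-Python sketch. The function names and the slope value 0.01 are illustrative assumptions, not something the text above prescribes.

```python
def relu(x: float) -> float:
    """Standard ReLU: passes positive inputs, zeroes everything else."""
    return x if x > 0 else 0.0


def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """Leaky ReLU: passes positive inputs, scales the rest by a small slope.

    negative_slope=0.01 is an assumed, commonly used value.
    """
    return x if x > 0 else negative_slope * x


inputs = [-2.0, -0.5, 0.0, 1.5]
relu_out = [relu(x) for x in inputs]          # negatives are flattened to 0.0
leaky_out = [leaky_relu(x) for x in inputs]   # negatives stay slightly negative

for x, r, l in zip(inputs, relu_out, leaky_out):
    print(f"x={x:5.1f}  relu={r:6.3f}  leaky_relu={l:6.3f}")
```

Both functions agree for positive inputs; they differ only in that Leaky ReLU keeps a scaled-down copy of negative inputs instead of discarding them.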

The leaky rectified linear unit (Leaky ReLU) is an activation function used in neural networks and a direct improvement on the standard rectified linear unit (ReLU). PyTorch, a popular deep learning framework, provides a convenient implementation of Leaky ReLU through its functional API.
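As a rough illustration of the PyTorch route, the sketch below calls `torch.nn.functional.leaky_relu` and, for comparison, the equivalent `torch.nn.LeakyReLU` module. It assumes PyTorch is installed; the input tensor is made up for the example, and `negative_slope=0.01` is simply PyTorch's default written out explicitly.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Made-up input values covering negative, zero, and positive cases.
x = torch.tensor([-2.0, -0.5, 0.0, 1.5])

# Functional form: a plain function call, handy inside a forward() method.
y_functional = F.leaky_relu(x, negative_slope=0.01)

# Module form: the same operation as a layer that can sit in nn.Sequential.
layer = nn.LeakyReLU(negative_slope=0.01)
y_module = layer(x)

print(y_functional)
print(y_module)
```

The functional form suits one-off calls, while the module form is usually more convenient when the activation is part of a layer stack.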

Leaky ReLU was designed to address the dying ReLU problem, where neurons become inactive and stop learning during training. It is a simple but effective activation function: an updated version of ReLU in which negative inputs still produce a small, non-zero output.

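The Leaky ReLU (leaky rectified linear unit) activation function is a modified version of the standard ReLU function that addresses the dying ReLU problem, where ReLU neurons can become permanently inactive. A ReLU neuron whose pre-activation is negative for every input outputs zero and receives zero gradient, so its weights stop updating; the small negative slope of Leaky ReLU keeps that gradient non-zero.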
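The dying ReLU problem can be sketched with a single hypothetical neuron whose bias is so negative that its pre-activation is below zero for every input. The weights, inputs, and the upstream gradient of 1.0 below are all made-up values for illustration.

```python
def relu_grad(z: float) -> float:
    """Derivative of ReLU with respect to its input."""
    return 1.0 if z > 0 else 0.0


def leaky_relu_grad(z: float, negative_slope: float = 0.01) -> float:
    """Derivative of Leaky ReLU; negative_slope=0.01 is an assumed value."""
    return 1.0 if z > 0 else negative_slope


# Hypothetical neuron z = w * x + b with a strongly negative bias.
w, b = 0.5, -10.0
inputs = [0.0, 1.0, 2.0, 3.0]
pre_activations = [w * x + b for x in inputs]  # every value is negative

# Gradient of the output with respect to w is f'(z) * x
# (upstream gradient assumed to be 1.0 for simplicity).
relu_weight_grads = [relu_grad(z) * x for z, x in zip(pre_activations, inputs)]
leaky_weight_grads = [leaky_relu_grad(z) * x for z, x in zip(pre_activations, inputs)]

print("ReLU weight gradients:      ", relu_weight_grads)
print("Leaky ReLU weight gradients:", leaky_weight_grads)
```

With ReLU every weight gradient is zero, so gradient descent can never move this neuron back into the active region. With Leaky ReLU the gradients are small but non-zero wherever the input is non-zero, which leaves the neuron a path to recovery.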