Leaky ReLU Activation Function


Leaky rectified linear unit, or Leaky ReLU, is an activation function used in neural networks (NNs) and is a direct improvement upon the standard rectified linear unit (ReLU) function. It was designed to address the dying ReLU problem, where neurons can become inactive and stop learning during training.

Output values of the Leaky ReLU function: for positive values of x (x > 0), the function behaves like the standard ReLU. The output increases linearly, following the equation f(x) = x, resulting in a straight line with a slope of 1. For non-positive values, the output is f(x) = αx, where α is a small fixed scale factor, so the line keeps a small nonzero slope instead of flattening to zero.
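A minimal NumPy sketch of the piecewise definition and its derivative follows. The function names are hypothetical, and the default slope `alpha=0.01` is an assumption for illustration (frameworks pick their own defaults). At x = 0 the sketch uses the negative-side slope, which is one common convention. Comparing the two gradient functions shows why Leaky ReLU avoids dying units: for non-positive inputs ReLU passes back a gradient of 0, while Leaky ReLU passes back `alpha`.

```python
import numpy as np

def leaky_relu(x, alpha=0.01):
    """Leaky ReLU: x for x > 0, alpha * x otherwise (alpha=0.01 is an assumed default)."""
    x = np.asarray(x, dtype=float)
    return np.where(x > 0, x, alpha * x)

def leaky_relu_grad(x, alpha=0.01):
    """Derivative of Leaky ReLU: 1 for x > 0, alpha otherwise (alpha chosen at x == 0)."""
    x = np.asarray(x, dtype=float)
    return np.where(x > 0, 1.0, alpha)

def relu_grad(x):
    """Derivative of standard ReLU for comparison: 0 for every non-positive input."""
    x = np.asarray(x, dtype=float)
    return np.where(x > 0, 1.0, 0.0)

x = np.array([-3.0, -0.5, 0.0, 2.0])
print(leaky_relu(x))        # negative inputs are scaled by alpha, positive ones pass through
print(leaky_relu_grad(x))   # gradient never drops to zero
print(relu_grad(x))         # gradient is zero for all non-positive inputs
```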

At least in TensorFlow 2.3.0.dev20200515, a LeakyReLU activation with an arbitrary alpha parameter can be passed as the `activation` argument of `Dense` layers:

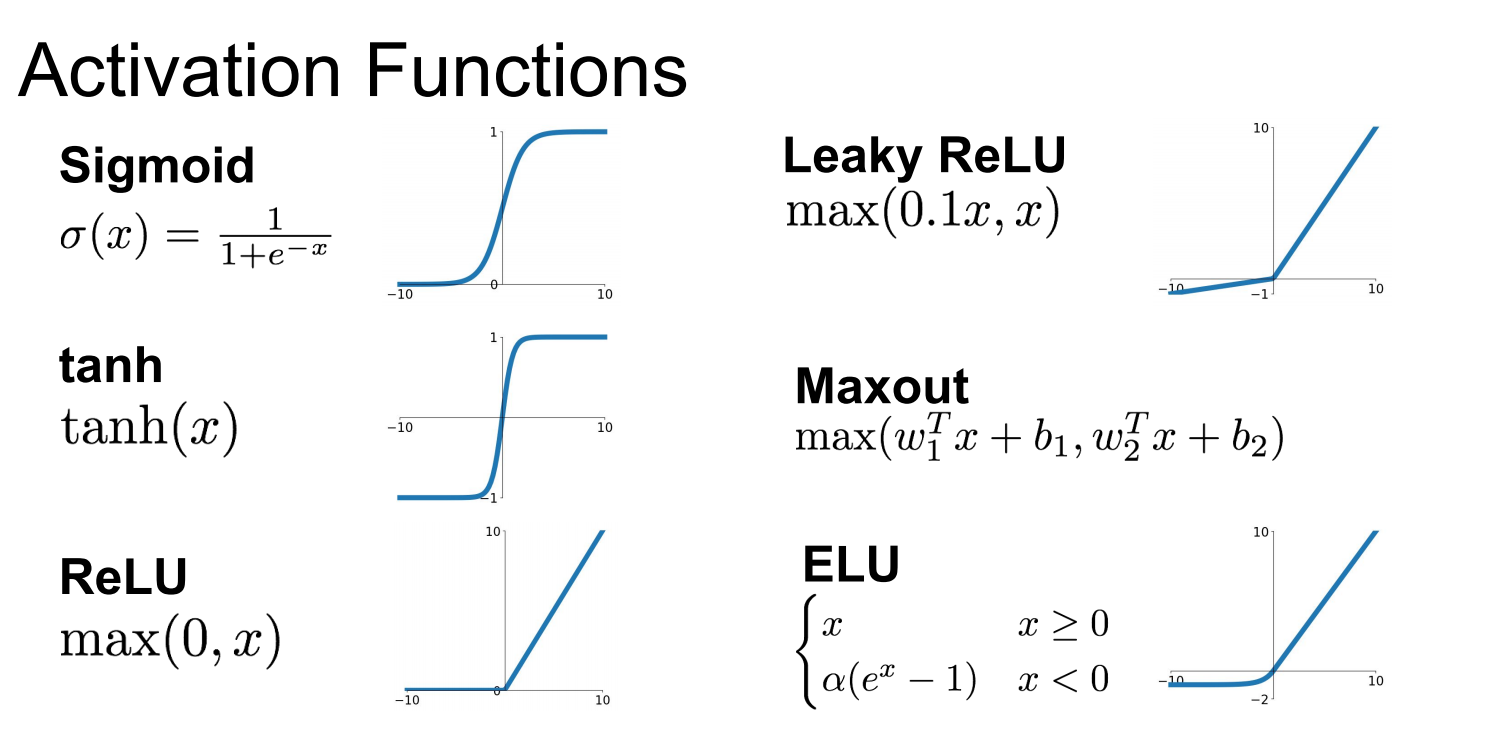

Several activation functions are commonly used in neural networks. The identity function f(x) = x is a basic linear activation, unbounded in its output range.

A common question is how to use LeakyReLU as an activation function in a Sequential DNN in Keras. If I want to write something similar to `model = Sequential()` followed by `model.add(Dense(90, activation='leakyrelu'))`, what is the correct approach?

The Keras documentation describes LeakyReLU as a leaky version of a rectified linear unit activation layer; this layer allows a small gradient when the unit is not active. Hence a reliable way to use LeakyReLU in Keras is to give the preceding layer the identity (linear) activation and add a LeakyReLU layer to compute the output.
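To make the two Keras patterns concrete without depending on a particular TensorFlow version, here is a framework-free NumPy sketch. `dense`, `LeakyReLULayer`, and the slope `alpha=0.3` are hypothetical stand-ins, not the Keras API: pattern 1 mirrors passing an activation callable to the layer, and pattern 2 mirrors a linear layer followed by a separate activation layer. Under these assumptions both produce identical outputs.

```python
import numpy as np

def leaky_relu(z, alpha=0.3):
    # Elementwise Leaky ReLU; alpha=0.3 is an assumed value for illustration.
    return np.where(z > 0, z, alpha * z)

def dense(x, W, b, activation=None):
    # Hypothetical fully connected layer computing activation(x @ W + b).
    # activation=None plays the role of the identity ("linear") activation.
    z = x @ W + b
    return z if activation is None else activation(z)

class LeakyReLULayer:
    # Hypothetical stand-alone activation layer with a fixed negative slope.
    def __init__(self, alpha=0.3):
        self.alpha = alpha

    def __call__(self, z):
        return leaky_relu(z, self.alpha)

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))    # batch of 4 samples with 8 features each
W = rng.normal(size=(8, 90))   # 90 units, matching Dense(90, ...) in the question
b = np.zeros(90)

# Pattern 1: pass the activation callable directly to the layer.
fused = dense(x, W, b, activation=lambda z: leaky_relu(z, alpha=0.3))

# Pattern 2: linear (identity) layer followed by a separate LeakyReLU layer.
stacked = LeakyReLULayer(alpha=0.3)(dense(x, W, b))

print(np.allclose(fused, stacked))
```

Because the activation is applied elementwise after the affine transform, moving it into its own layer does not change the result; the separate-layer form is simply easier to write when the framework does not accept the activation by name.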

This is best demonstrated with an example.

In this example, the article tries to predict diabetes in patients using a neural network.

What is the LeakyReLU activation function? LeakyReLU is a popular activation function that is often used in deep learning models, particularly in convolutional neural networks (CNNs) and generative adversarial networks (GANs). In CNNs, the LeakyReLU activation function can be used in the convolutional layers to learn features from the input data.

We use the PReLU (parametric ReLU) activation function to overcome the shortcomings of the ReLU and LeakyReLU activation functions: instead of fixing the negative slope in advance, PReLU learns it as a trainable parameter.

PReLU can increase the accuracy of a model.

The leaky rectified linear unit (Leaky ReLU) activation operation performs a nonlinear threshold operation, where any input value less than zero is multiplied by a fixed scale factor.
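The difference between Leaky ReLU's fixed scale factor and PReLU's learnable one can be seen in a small NumPy sketch. Assumptions: a single scalar slope shared by all inputs, toy data whose targets correspond to a slope of 0.25, mean squared error as the loss, and plain gradient descent; the function names are hypothetical. Since the derivative of αx with respect to α is x for non-positive inputs and 0 otherwise, only the negative inputs contribute to the slope's gradient.

```python
import numpy as np

def prelu(x, alpha):
    # Same shape as Leaky ReLU, but alpha is treated as a trainable parameter.
    return np.where(x > 0, x, alpha * x)

def prelu_grad_alpha(x, upstream):
    # dL/dalpha = sum of upstream * x over non-positive inputs (positive inputs contribute 0).
    return np.sum(upstream * np.where(x > 0, 0.0, x))

x = np.array([-2.0, -1.0, 0.5, 3.0])
target = np.array([-0.5, -0.25, 0.5, 3.0])  # toy targets consistent with alpha = 0.25
alpha, lr = 0.01, 0.1                        # assumed starting slope and learning rate

for _ in range(200):
    y = prelu(x, alpha)
    upstream = 2 * (y - target) / x.size     # gradient of mean squared error w.r.t. y
    alpha -= lr * prelu_grad_alpha(x, upstream)

print(round(alpha, 4))
```

With Leaky ReLU, `alpha` would stay at its initial value for the whole run; here the training loop moves it toward the slope that best fits the data.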